According to a 2024 survey conducted by Gartner, fewer than 30 percent of organizations across regulated industries report having a complete, current inventory of their enterprise data assets — and in life sciences, where data spans clinical trial records, regulatory submissions, manufacturing batch records, pharmacovigilance reports, and commercial datasets, that number is likely optimistic. The implications are not merely operational. As pharmaceutical, biotechnology, and medical device organizations accelerate their investments in artificial intelligence, they are discovering a foundational gap that no model, no platform, and no vendor can paper over: the absence of systematic data classification. Without knowing what data exists, where it lives, how sensitive it is, and what purposes it may legitimately serve, AI systems cannot be deployed responsibly or effectively. This is not a theoretical concern. It is the practical reality confronting data and technology leaders across the industry today.

The urgency of this problem has been dramatically amplified by the maturation of generative AI and, more recently, by the emergence of agentic AI systems — autonomous software agents capable of not merely retrieving and summarizing information, but of taking actions: drafting regulatory submissions, initiating procurement workflows, modifying records in validated systems, or triggering downstream processes. Where first-generation AI search tools required only that data be findable and appropriately access-controlled, agentic AI systems require something far more sophisticated: a data estate with rich, machine-readable metadata describing not just sensitivity, but intent, provenance, lineage, and workflow eligibility. Data classification, in this context, is not a nice-to-have. It is the prerequisite infrastructure upon which every meaningful AI capability in life sciences will be built or will fail to be built.

Watch: Why data classification is the most important AI investment life sciences companies can make right now.

The Data Classification Imperative in Life Sciences

To understand why data classification is particularly consequential in life sciences, it is necessary to appreciate the unusual complexity of the data landscape these organizations must manage. A mid-sized pharmaceutical company operating across clinical, regulatory, manufacturing, and commercial functions may maintain data in dozens of distinct systems — electronic data capture platforms, laboratory information management systems, document management platforms, ERP systems, CRM tools, safety databases, and regulatory information management systems — each with its own data model, access control paradigm, and retention requirement. Unlike industries where data tends to be more homogeneous, life sciences organizations deal with what can accurately be described as multi-modal, multi-jurisdictional, multi-lifecycle data: structured and unstructured, validated and informal, globally distributed and locally governed.

Regulatory frameworks add substantial additional complexity. GxP — the family of “Good Practice” regulations governing pharmaceutical manufacturing, clinical research, and distribution — imposes specific requirements on data integrity, traceability, and access control that are codified in instruments including FDA 21 CFR Part 11, which establishes the criteria under which electronic records and electronic signatures are considered trustworthy and equivalent to paper records. ICH E6(R3), the revised guideline on Good Clinical Practice published in final form in 2023, places explicit expectations on data governance, risk-proportionate oversight, and the management of trial master files. These are not merely compliance checkboxes. They define the conditions under which data may be used for regulatory decision-making — and by extension, the conditions under which AI systems operating on that data may be considered fit for purpose.

The concept of data governance in this context encompasses far more than access control or retention scheduling. It requires that organizations be able to assert, with evidence, what each data asset contains, who may access it, under what circumstances it may be processed, and how it traces back to its origin. A clinical trial dataset cannot simply be “available” — it must be classified by study phase, sponsor identity, jurisdiction, subject sensitivity, and regulatory submission status. A batch manufacturing record cannot simply be “stored” — it must be tagged with GxP applicability, product identity, audit trail completeness, and validation status. These classification attributes are not incidental metadata. They are the machine-readable signals that allow AI systems to make responsible, compliant decisions about what to retrieve, summarize, extract, or act upon.

The scale of the challenge is significant. McKinsey’s 2023 analysis of data readiness in the pharmaceutical sector found that organizations typically underestimate the volume of unstructured content requiring classification by a factor of three to five, and that legacy document repositories — SharePoint environments, file shares, legacy Documentum installations — are rarely inventoried with sufficient completeness to support AI deployment. Gartner has estimated that through 2026, more than 80 percent of AI projects that fail will do so not because of model limitations, but because of inadequate data quality and data governance infrastructure. In life sciences, where the stakes of data error include patient safety, regulatory action, and product recall, the cost of getting this wrong is not merely financial.

Why Most Organizations Get Data Classification Wrong

Despite widespread recognition that data classification matters, most life sciences organizations approach the problem in ways that are structurally insufficient for what AI actually requires. The most common failure mode is treating data classification as a one-time project rather than a continuous operational discipline. Organizations commission a data inventory, apply sensitivity labels to a portion of their document estate, declare victory, and move on — only to find, eighteen months later, that new content has accumulated without classification, that system migrations have disrupted metadata inheritance, and that the classification taxonomy designed for 2022’s compliance requirements does not map cleanly onto 2025’s AI use cases.

A second, related failure is the conflation of data classification with data sensitivity labeling. Sensitivity labeling — assigning designations such as Public, Internal, Confidential, or Restricted — is a necessary component of data classification, but it is far from sufficient. Sensitivity labels tell an AI system whether a document can be accessed. They say nothing about what the document contains, what it may be used for, whether it is a primary source or a derivative work, whether its conclusions have been superseded, or whether acting upon its contents requires human review. An organization that has meticulously applied sensitivity labels across its Microsoft 365 environment has completed perhaps 20 percent of the classification work that agentic AI actually requires.

The most consequential mistake, however — and the one that will create the widest competitive gaps over the next five years — is what can be called the “search-only trap.” Many organizations are currently building their AI strategy around retrieval-augmented generation search tools: systems like Microsoft 365 Copilot, Glean, or similar enterprise search platforms that allow employees to query internal knowledge bases using natural language. This is a legitimate and valuable use case, and it does require data classification work — specifically, the sensitivity labeling and access control enforcement that ensures the search system respects data boundaries. But organizations that stop there are preparing for the AI capabilities of 2024, not the AI capabilities of 2026 and beyond.

Agentic AI systems — which can autonomously navigate multi-step workflows, take actions in enterprise systems, and coordinate across tools without continuous human direction — require classification infrastructure that is qualitatively different from what search requires. Where search needs to know “is this document accessible to this user?”, an agent needs to know “is this document appropriate to summarize and include in a regulatory submission draft?”, “is this record one the agent may modify, or only read?”, “has this document been superseded by a later version?”, “does acting on this data require a wet signature or electronic approval?” These are not sensitivity questions. They are semantic, procedural, and governance questions — and answering them requires a classification framework designed specifically for agentic use cases.

The Two-Horizon Framework for Data Classification

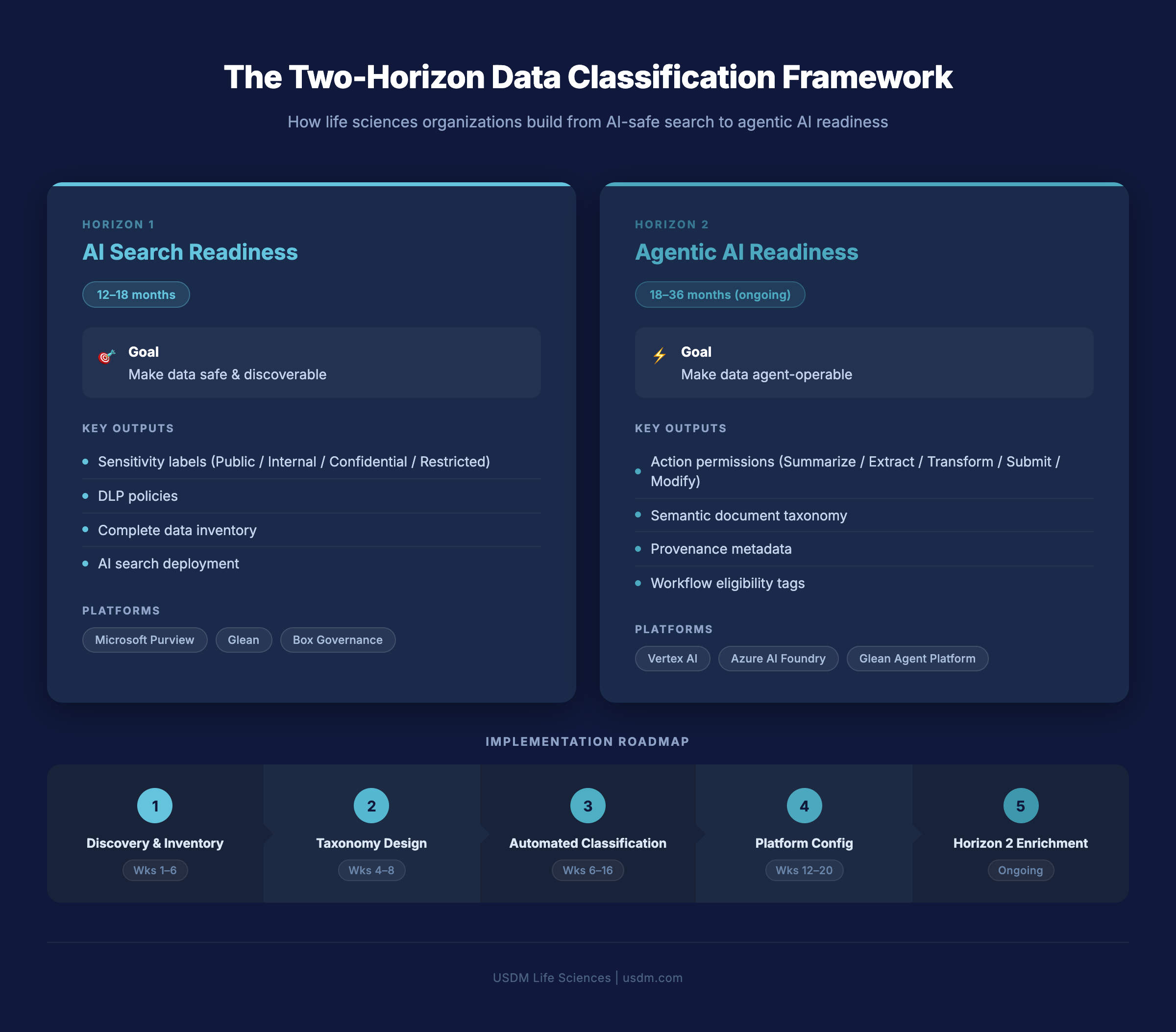

To navigate the gap between where most organizations are today and where they need to be to deploy agentic AI responsibly, it is useful to think in terms of two distinct horizons of data classification maturity. These horizons are not sequential in the sense that Horizon 1 must be fully complete before Horizon 2 begins — they overlap in practice, and early Horizon 2 work can and should begin while Horizon 1 is still being executed. But they represent fundamentally different levels of metadata richness, organizational capability, and AI system sophistication.

Horizon 1 — AI Search Readiness (12–18 Months)

Horizon 1 data classification is focused on the foundational infrastructure required to deploy AI-powered search and knowledge retrieval tools safely and effectively across a life sciences organization. It is the minimum viable classification state for responsible AI deployment, and for most organizations it represents a significant but achievable body of work that can be substantially completed within 12 to 18 months of serious organizational commitment.

The core deliverables of Horizon 1 include a complete data inventory across all major systems — document management platforms, collaboration environments, data warehouses, and content repositories — with enough granularity to establish what exists, where it lives, and who currently has access to it. For many organizations, this inventory work alone surfaces significant surprises: shadow data stores, orphaned repositories from legacy systems, content that has never been formally classified or access-controlled, and significant duplication across platforms.

Sensitivity labeling is the second major deliverable of Horizon 1. A four-tier taxonomy — Public, Internal, Confidential, and Restricted — is sufficient for most life sciences organizations at this horizon, though the specific criteria for each tier must be defined with reference to both regulatory requirements and business risk. GxP-applicable records, for example, will typically warrant Restricted or Confidential classification by default given their regulatory significance, while general corporate communications may appropriately sit at the Internal tier. Once labels are defined, automated classification tools — Microsoft Purview is the most widely deployed platform for this purpose in Microsoft-centric environments — can be configured to apply labels based on content inspection, location, and user-defined rules.

Data loss prevention policies and access control enforcement are the third pillar of Horizon 1. Classification without enforcement is annotation without consequence; it does not prevent misuse, it merely documents it. DLP policies must be configured to prevent classified content from being exfiltrated, shared inappropriately, or ingested by AI systems that lack the appropriate clearance. For life sciences organizations operating in validated environments, this enforcement layer must itself be subject to validation and change control.

With these three elements in place — inventory, labeling, and enforcement — organizations are positioned to deploy AI search tools including Microsoft 365 Copilot, Veeva Vault’s native AI capabilities, and third-party enterprise search platforms like Glean. These tools can retrieve, synthesize, and surface information from the classified data estate with confidence that sensitivity boundaries are being respected and that access is controlled. This is a genuinely valuable capability, and for many organizations it represents the first tangible return on their data classification investment.

Horizon 2 — Agentic AI Readiness (18–36 Months, Ongoing)

Horizon 2 data classification is where the work becomes both significantly more complex and significantly more consequential. Where Horizon 1 is concerned with whether data can be found and accessed, Horizon 2 is concerned with whether data can be acted upon — and under what conditions, by what agents, with what level of human oversight, and with what audit trail.

The foundational concept of Horizon 2 is action permissions: a classification dimension that specifies, for each document type or content category, what operations an AI agent may perform. A preliminary safety signal, for example, might be classified as permitting Summarize and Extract operations but not Submit or Modify — meaning an agent could condense it and pull structured data from it, but could not file it with a regulatory authority or alter its contents. A validated manufacturing procedure might permit Read and Summarize but require human confirmation before any Extract operation that feeds a downstream process. These action permission tags are not native features of any current enterprise content management platform; they represent a classification layer that organizations must design and implement deliberately.

Semantic document taxonomy is the second Horizon 2 deliverable. Where sensitivity labels answer “how sensitive is this?”, semantic taxonomy answers “what is this?” — distinguishing between a clinical study report, a regulatory submission cover letter, a pharmacovigilance case narrative, a quality system SOP, and a commercial contract. This taxonomy must be rich enough to be machine-readable and specific enough to drive agent decision-making. A well-designed semantic taxonomy for a pharmaceutical company might include 150 to 300 distinct document types, each with associated metadata schemas, ownership rules, and lifecycle states.

Provenance and lineage metadata — tracking where data came from, how it was derived, and what transformations it has undergone — is the third critical Horizon 2 element. For AI agents operating in regulated environments, the ability to assert data provenance is not optional. If an agent generates a draft regulatory response, a human reviewer needs to be able to trace every factual claim in that response back to its source document, with confidence that the source was a validated, authoritative record rather than an informal internal communication or a superseded version of a document. Without lineage metadata embedded in the classification framework, this traceability is impossible to provide systematically.

Workflow eligibility tags represent the fourth Horizon 2 dimension: metadata that describes which workflows a document may participate in, under what conditions, and with what approval requirements. A document tagged as “eligible for autonomous submission workflow” signals to an agent that it may be included in a regulatory filing without step-by-step human review of its inclusion. A document tagged as “requires QA review before workflow inclusion” signals the opposite. These tags operationalize governance at machine speed — which is precisely what agentic AI requires.

Horizon 2 is where competitive advantage lives. Organizations that complete this classification work will be able to deploy AI agents that can autonomously draft regulatory submissions, manage end-to-end pharmacovigilance workflows, coordinate clinical supply chain logistics, and handle complex multi-system document management tasks. Organizations that have only completed Horizon 1 will be able to search their data estate more efficiently — a meaningful but far more modest advantage. The gap between these two states is not a gap in AI capability. It is a gap in data infrastructure, and it is created entirely by decisions made years before the agents are deployed.

The Phased Implementation Path

Translating the two-horizon framework into an executable implementation plan requires a phased approach that builds capability progressively while delivering incremental value at each stage. The following phases represent a practical roadmap developed from implementation experience across life sciences organizations of varying scale and data maturity.

Phase 1: Discovery and Inventory

The discovery phase establishes the factual baseline that all subsequent classification work depends on. This means deploying automated scanning tools — Microsoft Purview’s data map capabilities, Varonis, or similar platforms — across all major content repositories, collaboration environments, and structured data systems to generate a comprehensive inventory of what exists. The output of this phase is not simply a list of systems; it is a map of data volumes, content types, age distributions, access patterns, and existing metadata completeness that allows the classification taxonomy design team to work from evidence rather than assumption. For most life sciences organizations, the discovery phase surfaces between 20 and 40 percent more content volume than was previously estimated, with the excess concentrated in legacy file shares, archived collaboration sites, and shadow repositories accumulated through mergers and acquisitions.

Phase 2: Taxonomy Design

Taxonomy design translates the inventory findings into a structured classification framework: the sensitivity tiers, semantic document types, action permission categories, and workflow eligibility definitions that will govern how content is labeled and how AI systems will interact with it. This phase must involve cross-functional stakeholders — regulatory affairs, quality assurance, legal, IT, and business line representatives — because the taxonomy must be both technically implementable and operationally meaningful to the people who create and use the content. A taxonomy designed solely by IT will fail to capture the regulatory nuances that make it trustworthy; a taxonomy designed solely by regulatory affairs will fail to accommodate the technical constraints of automated classification at scale. The deliverable of this phase is a documented taxonomy with clear definitions, examples, and classification decision trees that can be used to train both automated tools and human reviewers.

Phase 3: Automated Classification

With taxonomy in hand, the automated classification phase deploys machine learning-based content classification tools to apply labels at scale across the inventoried data estate. For sensitivity labeling in Microsoft environments, Purview’s trainable classifiers and built-in sensitive information types provide a strong starting point, supplemented by custom classifiers trained on life sciences-specific content patterns. Semantic classification — assigning document type tags from the taxonomy — typically requires a combination of rule-based logic for well-structured document types (SOPs, protocols, and submission documents often have predictable formatting and metadata) and ML-based classification for less structured content. Human review queues should be established for low-confidence classifications, with subject matter expert review driving continuous improvement of classifier accuracy. Target accuracy for automated classification at this phase should be 85 to 90 percent, with human review handling the remainder.

Phase 4: Platform Configuration

Platform configuration translates the classification framework into enforced policy. This includes configuring DLP rules in Microsoft Purview or equivalent platforms, updating access control policies in document management systems, integrating classification metadata with AI search platforms, and establishing the monitoring and alerting infrastructure that ensures ongoing policy compliance. For validated GxP systems, this phase must include formal validation activities — IQ, OQ, and PQ protocols for classification-related system configurations — which adds time and rigor but is non-negotiable for regulatory compliance. The output of this phase is a production-grade, enforced classification environment capable of supporting Horizon 1 AI search deployment with confidence.

Phase 5: Horizon 2 Enrichment

Horizon 2 enrichment is not a project with a defined end date — it is an ongoing operational discipline that progressively deepens the classification metadata layer to support increasingly sophisticated agentic AI capabilities. This phase introduces action permission tagging, provenance metadata enrichment, semantic taxonomy expansion, and workflow eligibility classification, typically beginning with the document types and workflows where agentic AI offers the highest business value: regulatory submission workflows, pharmacovigilance case processing, and clinical document management. Organizations that treat Horizon 2 enrichment as a continuous program — with dedicated resources, clear ownership, and regular review cycles — will compound their classification investments into expanding AI capability over time. Organizations that treat it as a future project to be started “when we’re ready” will find that readiness never arrives on its own.

Platform Considerations for Life Sciences

Selecting the right technology platforms for data classification is consequential, but it is important to recognize that no single platform addresses the full scope of what the two-horizon framework requires. Life sciences organizations should expect to operate a portfolio of complementary tools, integrated through APIs and shared metadata standards, rather than a single monolithic solution.

Microsoft Purview is the most widely adopted platform for sensitivity labeling and data loss prevention in enterprise environments. Its deep integration with Microsoft 365, SharePoint, Teams, and Azure Data Services makes it the natural choice for organizations heavily invested in the Microsoft ecosystem. Purview’s data map capabilities support automated discovery and classification across both cloud and on-premises repositories, and its trainable classifier framework allows organizations to build life sciences-specific classification models without requiring data science expertise. For Horizon 1 work in Microsoft environments, Purview is effectively the default starting point.

Veeva Vault is the dominant document management platform for GxP-regulated content in the pharmaceutical and biotech sectors. Vault’s native document type taxonomy — covering clinical, quality, regulatory, and safety document categories — provides a strong foundation for semantic classification, and Veeva’s continued investment in AI capabilities means that classification metadata stored in Vault is increasingly accessible to both Vault’s own AI features and third-party AI platforms. Organizations using Vault should ensure that their classification taxonomy design is aligned with Vault’s native metadata schema to avoid dual-maintenance overhead. Veeva’s platform ecosystem also includes Vault Link and other cross-system connectors that can propagate classification metadata across the broader data estate.

Box Governance addresses a specific but common gap: unstructured content that lives outside the validated document management environment — presentations, working documents, research files, and informal collaboration artifacts that nonetheless may contain information relevant to regulatory or AI use cases. Box Governance provides classification, retention, and legal hold capabilities for this content class, and Box’s AI features can be configured to respect classification boundaries established through the broader taxonomy.

Glean and Microsoft 365 Copilot are the leading enterprise AI search platforms for Horizon 1 deployment. Both platforms are designed to respect sensitivity labels and access control boundaries established through Purview and connected identity management systems, making them appropriate for deployment in environments where Horizon 1 classification work has been completed. Glean’s connector ecosystem is particularly broad, allowing it to index content from Veeva, Salesforce, ServiceNow, and dozens of other enterprise systems alongside Microsoft 365 content — a significant advantage for life sciences organizations with heterogeneous data estates.

Vertex AI, Azure AI Foundry, and the Glean Agent Platform represent the leading infrastructure options for Horizon 2 agentic AI deployment. These platforms provide the orchestration, tool integration, and governance capabilities required to deploy AI agents that can take actions across enterprise systems. However, it bears emphasis that the value of these platforms is entirely dependent on the quality of the classification metadata they can access. An agent deployed on Vertex AI or Azure AI Foundry against an unclassified or poorly classified data estate will produce unpredictable, ungovernable, and potentially non-compliant outputs. The platform is the engine; classification is the road.

The USDM Perspective

At USDM, we have spent considerable time over the past two years working through the practical implications of AI deployment in regulated life sciences environments — with clients ranging from early-stage biotechs preparing for their first regulatory submissions to global pharmaceutical companies managing data estates spanning decades of acquisition history and dozens of enterprise systems. What we have observed, consistently and across organization types, is that the companies making the most genuine progress toward AI capability are not the ones with the most sophisticated AI technology. They are the ones that made the deliberate, unglamorous decision to invest in data classification first.

This work is, without apology, boring. It does not generate the kind of excitement that a generative AI demonstration produces in a leadership offsite. It does not make for compelling vendor case studies or conference keynotes. It involves spreadsheets, stakeholder workshops, taxonomy debates, classification accuracy reviews, and DLP policy negotiations. It is the infrastructure work that nobody wants to fund until the AI project fails without it — at which point the cost of retrofitting classification onto an AI deployment in progress is approximately three times what it would have cost to build it correctly from the start.

Our perspective is that life sciences organizations owe it to themselves — and to the patients whose outcomes ultimately depend on the quality of their data — to treat data classification as a strategic investment rather than a compliance obligation. The organizations that get this right will be operating AI agents in their regulatory affairs, clinical operations, and quality management functions within three to five years. The organizations that defer this work will be watching from the outside as their competitors compress timelines, reduce submission costs, and improve signal detection in ways that were simply not possible without the classification infrastructure underneath.

The path forward is clear, even if it is not easy. Build the inventory. Design the taxonomy. Deploy the automated classifiers. Enforce the policies. Enrich progressively toward Horizon 2. And do not wait for the AI platform to be perfect before starting — because the platform will always be ready before the data is. If you are ready to have a substantive conversation about where your organization stands and what a realistic data classification roadmap looks like for your specific environment, we encourage you to contact our data strategy team.

Ready to build your data classification foundation?

USDM helps life sciences organizations move from data chaos to AI-ready infrastructure.

Frequently Asked Questions

Q: What is data classification in life sciences?

Data classification in life sciences is the systematic process of identifying, cataloging, and annotating enterprise data assets with structured metadata that describes their sensitivity, content type, regulatory status, and permissible uses. Unlike generic enterprise data classification, life sciences data classification must account for the specific requirements of GxP-regulated environments, including FDA 21 CFR Part 11 electronic records standards, ICH Good Clinical Practice guidelines, and jurisdiction-specific privacy regulations such as GDPR and HIPAA. A complete data classification framework for a life sciences organization will typically address sensitivity tiers, semantic document taxonomy, provenance and lineage attributes, access control policies, and — for organizations preparing for agentic AI — action permission designations that specify what automated systems may do with each class of content. Data classification is not a one-time project; it is an ongoing operational discipline that must evolve alongside the organization’s data estate and AI capabilities.

Q: Why is data classification required before AI deployment?

AI systems — whether search-oriented retrieval tools or autonomous agentic systems — operate on data, and the quality of their outputs is directly constrained by the quality of the metadata describing that data. Without classification, an AI system cannot determine whether a document is appropriate to retrieve for a given user, whether its contents are current and authoritative, whether it may be included in a regulatory filing, or whether acting on it requires human oversight. In regulated life sciences environments, these are not merely quality considerations — they are compliance requirements. An AI system that retrieves and summarizes a superseded clinical study protocol, or that drafts a regulatory submission incorporating invalidated safety data, creates both patient safety risk and regulatory liability. Data classification is the mechanism through which organizations establish the guardrails that make AI deployment both safe and effective. Deploying AI before completing foundational classification work is analogous to deploying a validated manufacturing process without completing the validation — technically possible in the short term, but fundamentally incompatible with the organization’s regulatory obligations.

Q: What is the difference between Horizon 1 and Horizon 2 data classification?

Horizon 1 data classification focuses on the foundational metadata required to deploy AI-powered search and knowledge retrieval tools: a complete data inventory, sensitivity labels (Public, Internal, Confidential, Restricted), access control enforcement, and data loss prevention policies. Horizon 1 enables organizations to deploy tools like Microsoft 365 Copilot and Glean with confidence that data boundaries are respected and that the right people can access the right information. Horizon 2 data classification builds on this foundation with the richer, more semantically complex metadata required for agentic AI systems: action permission tags specifying what operations agents may perform on each document type, semantic taxonomy identifying what each document is and what it contains, provenance and lineage metadata tracing data back to authoritative sources, and workflow eligibility tags indicating which automated processes a document may participate in. The distinction matters because organizations that build only Horizon 1 infrastructure can deploy AI search but will be unable to deploy autonomous agents safely — and the competitive advantage from agentic AI is substantially greater than from search alone.

Q: How long does enterprise data classification take for a life sciences company?

For a mid-sized life sciences organization — a company with 1,000 to 5,000 employees, multiple enterprise systems, and a significant legacy document estate — Horizon 1 data classification can typically be completed in 12 to 18 months with appropriate resourcing and organizational commitment. The most time-consuming elements are typically the initial discovery and inventory phase, which often reveals significantly more content volume than anticipated, and the sensitivity labeling of legacy content, which requires a combination of automated classification and human review. Horizon 2 enrichment, which adds the more complex metadata required for agentic AI, is an ongoing program rather than a bounded project; organizations should expect to spend 18 to 36 months building initial Horizon 2 capability for priority document types and workflows, with continuous enrichment thereafter. Factors that significantly accelerate timelines include strong executive sponsorship, pre-existing data governance programs, investment in automated classification tooling, and engagement of experienced implementation partners with life sciences-specific expertise. Factors that slow progress include organizational silos between IT and business functions, highly fragmented legacy data estates, and the absence of a defined classification taxonomy at project initiation.

Q: What platforms support GxP-compliant data classification?

The primary platforms supporting GxP-compliant data classification in life sciences are Microsoft Purview, Veeva Vault, and Box Governance, each serving distinct segments of the enterprise content landscape. Microsoft Purview provides sensitivity labeling, automated content classification, and data loss prevention enforcement across the Microsoft 365 ecosystem and connected repositories, and can be configured and validated to support 21 CFR Part 11 compliance requirements for electronic records. Veeva Vault, as the dominant GxP document management platform in pharma and biotech, provides native document type taxonomy and audit trail capabilities that are foundational to GxP-compliant classification for regulated content. Box Governance addresses unstructured content outside validated systems with classification, retention, and legal hold capabilities. For organizations seeking to extend classification metadata to AI search and agentic platforms, Glean, Microsoft 365 Copilot, and the Glean Agent Platform are designed to consume and respect classification metadata from these foundational systems. Any platform used in a GxP-regulated classification context must itself be subject to appropriate qualification and validation activities in accordance with the organization’s quality management system and applicable regulatory guidance.

Conclusion

The life sciences industry is at a genuine inflection point. The AI capabilities that will define competitive positioning over the next decade — autonomous regulatory submission workflows, AI-assisted pharmacovigilance, intelligent clinical operations, machine-speed quality management — are no longer speculative. They are being built today, by organizations that made the decision, often several years ago, to invest in the data infrastructure that makes them possible. That infrastructure is data classification: not as a compliance exercise, not as a one-time project, but as a sustained, strategically funded operational capability that progressively enriches the metadata layer of the enterprise data estate.

The two-horizon framework presented in this article provides a practical organizing principle for that investment. Horizon 1 — sensitivity labeling, data inventory, access enforcement, and AI search deployment — is achievable within 18 months and delivers meaningful returns in the form of improved knowledge access and reduced information risk. Horizon 2 — action permissions, semantic taxonomy, provenance metadata, and workflow eligibility classification — is a longer journey, measured in years rather than months, but it is the journey that leads to autonomous AI agents operating safely and compliantly in regulated environments. Organizations that pursue both horizons deliberately, with appropriate resourcing and governance, will find that their AI investments compound over time in ways that are simply not available to organizations that skipped the classification foundation.

The work is not glamorous. The spreadsheets are not exciting. The taxonomy debates are not the kind of thing that makes headlines. But the organizations that will be leading their sectors in AI capability five years from now are the ones starting this work today — and USDM is committed to being the partner that helps them get it right. Our data governance and AI strategy practices are designed specifically for the regulated life sciences environment, and our experience across pharma, biotech, medical device, and CRO/CDMO organizations means we understand both the regulatory constraints and the operational realities that make this work uniquely complex. The path forward starts with knowing what you have. Let us help you find out.