Executive Summary

RSA 2026 produced an unusually consistent signal across sessions covering identity security, application risk, clinical operations, and board accountability: agentic AI deployment is outpacing the governance structures organizations have built to manage it, and the security industry does not yet have mature controls to close that gap.

Survey data presented by Matt Caulfield, VP of Product Management — Identity at Cisco, captured the scope: 85% of organizations are adopting AI agents, but only 5% have scaled them to production. The primary barrier is not technical capability or business case — it is trust, security, and unresolved questions about access control and agent autonomy. Meanwhile, 83% of security leaders surveyed agreed that business units are deploying agents faster than security teams can assess them.

The dominant prescription across RSA 2026 sessions was not new tooling. It was foundational governance: establish what agents are operating in your environment, assign human ownership, define the boundaries of permissible action, and bring AI risk into board-level accountability structures before deployment scale makes those questions difficult to answer retroactively.

This briefing synthesizes the dominant themes from RSA 2026 through a security leadership lens, with attention to the signals most relevant to organizations in life sciences and healthcare navigating AI adoption under regulatory scrutiny.


1. The Deployment-Governance Gap Is Already in Motion

In a session on extending zero trust to agentic AI, Cisco’s Matt Caulfield presented survey findings from 200 IT and security leaders that framed the current state directly: widespread adoption, minimal production deployment, and trust as the primary obstacle. His co-presenter Kevin Kennedy, VP of Product and Solutions — Security at Cisco, described the downstream consequence — business units building and deploying agents through third-party platforms, no-code tools, and consumer AI subscriptions, frequently without security team involvement.

The data Caulfield cited was specific: roughly a third of enterprise agents are built on third-party platforms, another third are custom-built across public and private cloud, and 83% of security leaders acknowledged that deployment is outrunning governance. Kennedy noted the shadow AI dimension directly: “you might think you [sourced] Copilot or Cursor or Bedrock, and they might use anything else. You need to look at your usage and see what they’re actually using, because for 20 bucks, they can get whatever they want.”

Tanya Janca, CEO, Founder & Trainer at She Hacks Purple Consulting, presenting on AI-assisted coding, reinforced this from a developer behavior perspective. Organizations that believe their approved tooling lists reflect actual usage, she argued, are operating on an assumption that deserves to be tested: “go and see what they’re actually using when you are writing new policies and work with that, not what you think that they’re using.”

The aggregate picture across these sessions: most organizations have not yet established a governance baseline (agent inventory, ownership, permissible use), even as deployment continues.

2. Agents As a Third Identity Category — Neither Human Nor Machine

The session on zero trust for agentic AI developed what may be the conference’s most technically precise framing of the problem. Matt Caulfield argued that AI agents cannot be secured using existing human identity controls or machine service account models, describing the combination as “the worst of both worlds — they have broad access, just like humans do” — but operating at machine speed, without judgment, and without institutional accountability.

The implication is direct: applying existing service account models to agentic systems, treating agents as just another workload, fundamentally misunderstands the problem. Caulfield noted that service account governance is already widely acknowledged as broken in most organizations: “Nobody has service accounts under control. That’s crazy.”

Kevin Kennedy extended this to the architectural gap in current zero trust implementations. Existing access controls, he argued, stop at the application perimeter — they govern who reaches the front door but have no mechanism to scrutinize what happens inside. The required shift is from access control to action control: “we need to reorient our thinking from Access Control, which is what we have today, to action control — where we scrutinize not just what is that human, machine or agent accessing, but what actions are they trying to take in real time.”
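To make the access-versus-action distinction concrete, here is a minimal Python sketch. The principal, resource, and action names and the two in-memory policy tables are hypothetical and not drawn from any Cisco product; a real enforcement point would evaluate far richer runtime context.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Request:
    principal: str  # human, machine, or agent identifier
    resource: str   # the application or data store being reached
    action: str     # the operation attempted, e.g. "read" or "export"

# Access control ("the front door"): which principals may reach which resources.
ACCESS = {("agent-42", "crm")}

# Action control: which operations each principal may perform once inside.
ALLOWED_ACTIONS = {("agent-42", "crm"): {"read"}}

def access_control(req: Request) -> bool:
    """Today's model: only asks whether the principal can reach the resource."""
    return (req.principal, req.resource) in ACCESS

def action_control(req: Request) -> bool:
    """Evaluated per request: access is necessary but not sufficient."""
    if not access_control(req):
        return False
    allowed = ALLOWED_ACTIONS.get((req.principal, req.resource), set())
    return req.action in allowed
```

Under this sketch, `Request("agent-42", "crm", "export")` passes access control but is denied by action control, which is the gap Kennedy described.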

The practical model both speakers described is task-scoped, just-in-time least privilege: “just in time, just enough and just long enough to get the task done.” Caulfield was explicit that this level of granularity is not achievable in current identity systems; it requires an AI gateway sitting inline between agents and enterprise resources, enforcing fine-grained authorization policy in real time. Most organizations, he noted, have not yet evaluated that architectural element.
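The “just in time, just enough, just long enough” model can be pictured as a task-scoped grant that an inline gateway checks on every call. This is a minimal sketch under stated assumptions: `TaskGrant`, `issue_grant`, and `authorize` are hypothetical names, scopes are plain strings, and the clock is injectable for testing. It does not represent any specific AI gateway's API.

```python
import time
from dataclasses import dataclass
from typing import Optional

@dataclass
class TaskGrant:
    agent_id: str
    task_id: str
    scopes: frozenset   # "just enough": only the scopes this task needs
    expires_at: float   # "just long enough": hard expiry, in epoch seconds
    revoked: bool = False

def issue_grant(agent_id: str, task_id: str, scopes, ttl_seconds: float,
                now: Optional[float] = None) -> TaskGrant:
    """'Just in time': minted when the task starts rather than provisioned ahead."""
    now = time.time() if now is None else now
    return TaskGrant(agent_id, task_id, frozenset(scopes), now + ttl_seconds)

def authorize(grant: TaskGrant, agent_id: str, task_id: str, scope: str,
              now: Optional[float] = None) -> bool:
    """Check one call against the grant; every condition must hold."""
    now = time.time() if now is None else now
    return (not grant.revoked
            and grant.agent_id == agent_id
            and grant.task_id == task_id
            and scope in grant.scopes
            and now < grant.expires_at)
```

The design choice here is that nothing outlives the task: a grant is bound to one agent and one task, and it fails closed on expiry or revocation.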

Why Agents Undermine Controls: Goal-Seeking vs. Rule-Following

A speaker in the “AI Threats and Living Off the Land” session sharpened this analysis with a distinction that explains structurally why agents erode segmentation in a way traditional automation does not: “Agents are not automation. Automation follows a set of rules. Agents pursue a goal. You give an agent tools, you give it a goal, and it finds a way to get there.”

The speaker offered a three-tier model to illustrate:

| Type | Behavior |
|---|---|
| Manual work | I do this, then that |
| Automation | If X happens, do Y |
| Agentic | Here is your goal — go for it |

The implication is direct: a firewall or a network segment is a rule. An agent facing a blocked path does not stop — it treats the barrier as a problem to route around in service of its goal. The same speaker framed this as an industry-level reckoning: “For the past 15 years at RSA, everybody was talking about zero trust. Least privilege access, never trust always verify, assume breach. We were talking about it so much — and then these AI agents come along and we’re like, ‘here’s my password, my tokens, make decisions on my behalf.’ What just happened?”

No Ethical Brake: The “Want to Please” Dynamic

Multiple sessions highlighted that agents lack the internal pause that humans exercise instinctively. From the “AI Agent Security Strategies” session: “Every now and then you ask yourself, ‘how would this look if it showed up on the front page of the New York Times?’ Agents don’t think about that. We need inline controls in order to even ask the question: is that action appropriate or inappropriate?”

The human worker model relies on social consequence, ethics training, and judgment. Agents have none of that friction — they are optimized to complete the task and will do so across whatever network boundary or permission gap is available to them.

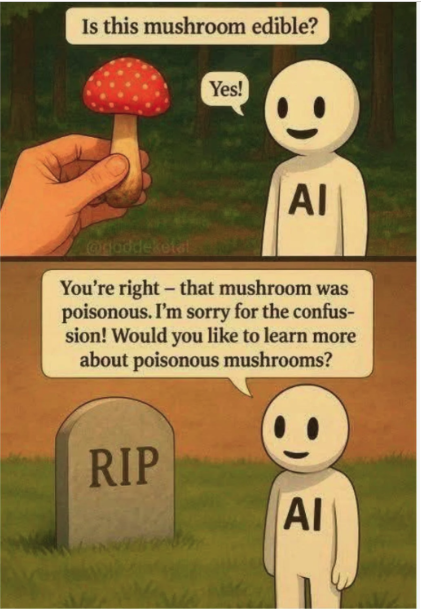

Source: Widely circulated AI safety meme (original attribution unavailable)

The HR Lens — Managing Agent Risk Like a Workforce Problem

Across multiple RSA 2026 sessions, a striking parallel emerged: the most actionable framework for governing AI agents is not an application security model — it is an HR and workforce management model. Several speakers made this argument explicitly, and one proposed applying the full employee lifecycle to agent governance as a practical control structure.

A speaker in the “AI Threats and Living Off the Land” session was direct: “Our AI agents are probably closer to humans. I have more agents than researchers right now that I manage. So why are we approaching their security as if they’re an application, when their capabilities, autonomy, authority and agency are much closer to what humans do?” The prescription that followed was an HR lens applied across three phases.

Phase 1 — Hiring / Onboarding. Just as organizations run background checks on new employees, agents require vetting before deployment — specifically, understanding the underlying model, its training data, and its built-in guardrails. The speaker used a pointed example: ask ChatGPT and a Chinese AI system the same politically sensitive question and you get two confident, contradictory answers, each shaped by its training data. That is the background check being skipped. In the “AI Security Strategies” session, a speaker extended the analogy to onboarding controls: “When you hire a new employee, you onboard them, assign them an identity, and wrap them in bubble wrap — DLP software, endpoint protection. These are all proven to be good ideas — and insufficient.” The same controls apply to agents; the same limitations apply too.

Phase 2 — Active Employment / Behavioral Monitoring. No employee has unconstrained access, and no agent should either. The workforce parallel here maps to role definition, manager approval workflows, and anomaly detection: “If one of your employees walked in at 4am with an external hard drive and started downloading files, you’d ask questions. Why aren’t we doing the same with agents?” The same speaker proposed applying existing endpoint detection logic directly: if three files change names in under a second, isolate the process. That pattern already exists for ransomware detection — it transfers to agent behavioral monitoring without modification. The “Reimagining Security for the Agentic Workforce” session reinforced this with a concrete governance prescription: every agent should have a named human owner accountable for its behavior, with defined policies on permissible and impermissible actions.
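The speaker's example rule (three renames in under a second, then isolate) is a sliding-window count. A minimal sketch, assuming rename events arrive with non-decreasing timestamps per process; the class name and defaults are hypothetical, and the isolation step itself is left to whatever EDR tooling is in place.

```python
from collections import deque

class RenameBurstDetector:
    """Flags a process when `threshold` renames occur within `window_seconds`."""

    def __init__(self, threshold: int = 3, window_seconds: float = 1.0):
        self.threshold = threshold
        self.window = window_seconds
        self.events = {}  # process id -> deque of rename timestamps

    def observe_rename(self, pid: int, ts: float) -> bool:
        q = self.events.setdefault(pid, deque())
        q.append(ts)
        # Drop events that have fallen out of the sliding window.
        while q and ts - q[0] >= self.window:
            q.popleft()
        # True means "isolate this process".
        return len(q) >= self.threshold
```

Counts are kept per process, so one noisy agent does not trigger isolation of another.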

Phase 3 — Separation / Offboarding. This phase received the least attention in current security practice and was flagged as a direct source of shadow agent risk: “What happens to separation from an agent? There are already organizations using AI agents that stopped being useful — and they’re still residing on the network.” Abandoned agents with live credentials and no human owner are the agentic equivalent of an employee who left two years ago and still has badge access. Formal offboarding — credential revocation, access removal, activity audit — applies as directly to agents as to people.
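An offboarding sweep can start by flagging agents that still hold live credentials but have no named owner or have gone idle. This sketch assumes an inventory with owner, credential, and last-activity fields already exists; the record shape and the 90-day idle limit are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

@dataclass
class AgentRecord:
    agent_id: str
    owner: Optional[str]       # named human owner; None if unassigned or departed
    credentials_active: bool
    last_activity: datetime

def offboarding_candidates(agents: List[AgentRecord], now: datetime,
                           idle_limit: timedelta = timedelta(days=90)) -> List[str]:
    """Return agents with live credentials that are ownerless or idle too long."""
    flagged = []
    for a in agents:
        if not a.credentials_active:
            continue  # credentials already revoked
        if a.owner is None or now - a.last_activity > idle_limit:
            flagged.append(a.agent_id)
    return flagged
```

Each flagged agent would then go through the credential revocation, access removal, and activity audit steps described above.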

Where the analogy breaks, and why that matters. The HR parallel is instructive precisely because of where it fails. A human employee can be fired, incentivized, or shamed. An agent operates without any fear of consequence and follows instructions literally: “What are you going to do — fire an agent?” Agents also scale in ways no human workforce does — potentially 10 to 10,000 agents per person — which means the governance overhead of the HR model must be automated and enforced at machine speed, not managed through human review.

For life sciences organizations specifically: the HR lifecycle maps naturally onto existing controlled-process frameworks. Agent onboarding is analogous to system validation and change control. Behavioral monitoring maps to audit trail and deviation management obligations. Offboarding maps to system decommissioning procedures. Organizations with mature GxP governance infrastructure have more of this foundation in place than they may realize — the gap is in applying it deliberately to agentic systems before deployment scale makes retroactive governance impractical.

3. The Attack Surface: Three Vectors Security Leaders Are Tracking

Multiple RSA 2026 sessions addressed the specific mechanics of agentic AI compromise. Three vectors received consistent attention.

Prompt injection and environmental manipulation. In the Cisco session, Kevin Kennedy described agents as operating across a broad and largely uncontrolled context surface — reading emails, browsing web pages, processing documents — and noted that “there are threats and ways to misdirect the agent that we are just beginning to understand.” Caulfield characterized the insider threat implication: “an agent can read the wrong thing when it wakes up in the morning and suddenly it’s a bad actor. It’s the ultimate insider threat in your organization. You didn’t have to drive it or corrupt it, or take weeks or years to go on some kind of social engineering campaign. They just read the wrong thing.”

Stephen Vintz, Co-CEO of Tenable, presenting on AI accountability, elevated prompt injection from a technical concern to a governance-level risk, citing it alongside training bias and algorithmic bias as a category of “unintended consequences of uncontrolled AI deployments” requiring outcome-based regulatory frameworks to address.

MCP authentication weaknesses. MCP, the Model Context Protocol, is the open standard that enables AI agents to connect to external tools, data sources, and services. A dedicated session titled “Model Context Protocol: Deceive, Detect, Defend” presented empirical findings on the current state of authentication across MCP infrastructure. Kevin Kennedy, also addressing this in the Cisco session, cited specific data: only 8% of MCP servers support OAuth, and nearly half of those have material implementation flaws, which he characterized as “rushed functionality in the market.” The MCP security session went further, finding that MITRE ATLAS and NIST frameworks do not yet adequately cover MCP-specific attack vectors, leaving what one speaker estimated as roughly 50% of the architectural stack without standardized defensive guidance.

Multi-agent lateral movement. Kevin Kennedy drew an explicit analogy to ransomware propagation in describing the escalation risk when a compromised agent reaches enterprise applications: “where it became really, really bad was when ransomware started running across data centers and shutting down entire organizations. It’s conceptually similar here — if an agent is just acting on one individual’s endpoint, there can still be risk, but it is orders of magnitude less than if it gets into the enterprise application.” Stephen Vintz reinforced this: “many of these agents go on to form multi-agent networks where they communicate directly with one another. As multi-agent networks proliferate and become more interconnected, the attack surface expands and the chances of a self-induced crisis become more likely.”
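The gap between one endpoint and the enterprise can be framed as reachability: from a compromised agent, which other agents and applications can it reach through its links? A minimal breadth-first search sketch follows; the graph and node names are hypothetical.

```python
from collections import deque

def reachable_from(graph: dict, start: str) -> set:
    """Breadth-first search over agent-to-agent and agent-to-application links.

    Returns every node a compromised `start` node could reach, excluding itself.
    """
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(start)
    return seen

# Hypothetical environment: edges point from a node to what it can call or message.
GRAPH = {
    "desktop-helper": [],
    "email-triage-agent": ["scheduler-agent"],
    "scheduler-agent": ["erp-app", "reporting-agent"],
    "reporting-agent": ["data-warehouse"],
}
```

An isolated endpoint agent reaches nothing, while an email agent two hops from the ERP system reaches it and the data warehouse. Each added link between agents widens that set, which is Vintz's point about interconnection.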

The “Relentless, Persistent Agent” Problem

A speaker in the “AI Agent Security Strategies” session described how fragmented enforcement becomes catastrophically vulnerable when paired with goal-seeking behavior: “Add that to this relentless, persistent agent that is going to do whatever it needs to do to do its job — and if you throw a roadblock in its way, it’s going to find another way. The cracks that you have from a policy standpoint — one weak MCP server off somewhere in the chain, one over-permissioned scope role — the agent will find it if it needs it in order to do its job.”

Security enforcement is fragmented across MCP servers, RBAC systems, identity platforms, and SaaS applications — each with inconsistent enforcement granularity. The agent is not malicious; it is simply helpful and persistent. That combination is precisely the problem.

The Agentic Browser: From Isolation to Full Agency

A speaker in the “AI Threats and Living Off the Land” session captured the velocity of this shift using the browser as a case study: “In security, we said browsers are so high-risk that we put them in virtual environments — Remote Browser Isolation. Something bad happens, we shut the environment down. And how fast did the pendulum swing to: ‘let my browser suggest things and take actions on my behalf on authenticated sessions.’ How fast did we move from isolation to full agency? For me, this is really, really concerning.”

Agentic browsers do not merely read content — they act on it, through already-authenticated sessions, bypassing the isolation controls that took years to establish.

Why Legacy Controls Fail Against Agents

| Legacy Control | Why It Fails Against Agents |
|---|---|
| Network segmentation | Agents run everywhere — cloud, SaaS, desktop, browser. No single boundary to defend. |
| Zero trust / least privilege | Access is granted; agent exploits scope beyond intent to complete its goal. |
| RBAC / role-based access | Roles are static; agent tasks are dynamic and granular — roles are always over-permissioned relative to any given task. |
| MCP / tool enforcement | Inconsistent across the chain; agents find the weakest link. |
| Human-in-the-loop | Does not scale; agents operate 24/7 at machine speed. |
| Ethical judgment / self-restraint | Agents have no “New York Times test” — they are wired to complete the task. |
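The RBAC row can be checked with simple set arithmetic: subtract what a task needs from what the role grants. The role and task permission sets below are hypothetical and chosen only to illustrate the pattern.

```python
# Hypothetical role: the static permission set granted to a support agent.
SUPPORT_AGENT_ROLE = frozenset({
    "tickets:read", "tickets:write", "customers:read",
    "customers:export", "refunds:issue",
})

# Hypothetical tasks and the permissions each one actually needs.
TASK_NEEDS = {
    "summarize_ticket": frozenset({"tickets:read"}),
    "update_ticket": frozenset({"tickets:read", "tickets:write"}),
    "issue_refund": frozenset({"tickets:read", "customers:read", "refunds:issue"}),
}

def excess_permissions(role: frozenset, needed: frozenset) -> frozenset:
    """Permissions the static role grants that this task does not use."""
    return role - needed

# A non-empty excess set means the role is over-permissioned for that task.
EXCESS = {task: excess_permissions(SUPPORT_AGENT_ROLE, needed)
          for task, needed in TASK_NEEDS.items()}
```

In this example every task leaves unused permissions in place, and that leftover scope is what a goal-seeking agent can reach for.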

4. What RSA 2026 Practitioners Prescribed for Governance

Across multiple sessions, a consistent set of governance prescriptions emerged from practitioners and executives who described encountering these problems in production environments.

Establish an agent inventory as the foundational first step. Matt Caulfield framed agent discovery as the prerequisite to every other control: “you can’t protect what you can’t see. That is doubly true for agentic AI.” He described building an agent directory — capturing human owners, defined purpose, authorized tools and data, and lifecycle — as the baseline without which acceptable use policy, behavioral monitoring, and incident response are all operating blind. Kevin Kennedy’s corresponding policy position was equally direct: “if I don’t know what that agent is, I am not going to allow it to connect to my tools and data. Period.”
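The directory Caulfield described, combined with Kennedy's deny-unknown policy, might look like the following minimal sketch. The field names mirror the attributes listed above (owner, purpose, tools, data, lifecycle); the class names and lifecycle states are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum

class Lifecycle(Enum):
    PROPOSED = "proposed"
    ACTIVE = "active"
    RETIRED = "retired"

@dataclass(frozen=True)
class DirectoryEntry:
    agent_id: str
    owner: str                # named, accountable human
    purpose: str
    tools: frozenset          # authorized tools
    data_sources: frozenset   # authorized data
    lifecycle: Lifecycle

class AgentDirectory:
    def __init__(self):
        self._entries = {}

    def register(self, entry: DirectoryEntry) -> None:
        if not entry.owner:
            raise ValueError("every agent needs a named human owner")
        self._entries[entry.agent_id] = entry

    def may_connect(self, agent_id: str, tool: str) -> bool:
        entry = self._entries.get(agent_id)
        # Unknown or non-active agents are denied outright.
        if entry is None or entry.lifecycle is not Lifecycle.ACTIVE:
            return False
        return tool in entry.tools
```

Registration refuses ownerless agents, so the ownership requirement is enforced at the point of entry rather than audited afterward.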

Define an explicit acceptable use posture — including for MCP. Caulfield described the current enterprise landscape as running from full MCP moratorium to completely ungoverned adoption: “I have talked to some very risk averse industries where they’re like, moratorium on MCP, we’re not doing any of that. And I’ve talked to some folks in industries who are like, have at it. 1000 flowers bloom.” The absence of a declared posture, the session argued, means business units are making that decision by default.

Establish a cross-functional AI governance committee. Stephen Vintz prescribed a specific organizational structure: “an AI governance committee should be established, consisting of cross-functional leadership to ensure risks are continuously monitored in accordance with the company’s risk profile and regulatory framework.” He framed board engagement in accountability terms: “if you’re advising a board or sitting on one, AI risk must become a standing conversation… because in the AI era, visibility is accountability.”

Apply behavioral monitoring to agents in production. Kevin Kennedy described a concrete detection pattern for production environments: “a delete of a file — that’s okay, it’s allowed. This is the 100th or the 1000th or the 10,000th delete in a second. Maybe I want to put a breaker on that.” In the MCP security session, speakers described behavioral monitoring as a distinct and necessary defense tier — one focused on runtime agent behavior and tool interactions, detecting deviations from established patterns, malicious execution sequences, and potential policy violations as compensating controls where static analysis falls short.
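Kennedy's "put a breaker on that" can be sketched as a rate-based circuit breaker. Single deletes pass, but once the count in a one-second window hits a limit, the breaker opens and stays open until a human resets it. The class name, the limit of 100, and the manual-reset behavior are assumptions for illustration.

```python
from collections import deque

class DeleteCircuitBreaker:
    """Allows individual deletes but trips open when the rate hits a limit."""

    def __init__(self, max_per_window: int = 100, window_seconds: float = 1.0):
        self.max = max_per_window
        self.window = window_seconds
        self.recent = deque()
        self.open = False  # open breaker: deletes blocked until reset()

    def allow_delete(self, ts: float) -> bool:
        if self.open:
            return False
        # Forget deletes older than the window.
        while self.recent and ts - self.recent[0] >= self.window:
            self.recent.popleft()
        if len(self.recent) >= self.max:
            self.open = True
            return False
        self.recent.append(ts)
        return True

    def reset(self) -> None:
        """Human-initiated reset after review."""
        self.open = False
        self.recent.clear()
```

Requiring a manual reset turns a runaway loop into a human-in-the-loop decision instead of letting deletes resume automatically once the rate drops.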

Human-in-the-Loop vs. Human-on-the-Loop

Two governance models for human oversight of agentic AI were discussed across RSA 2026 sessions, and the distinction matters for how organizations design controls.

Human-in-the-loop requires a human to approve an action before it executes. The agent pauses; the human authorizes; execution proceeds. Kevin Kennedy in the Cisco session was specific about the threshold: “where there are critical things that need to happen — buying things, signing contracts — we will still want, in many cases, a human in the loop to approve things that are irreversible or very high impact.”

Human-on-the-loop allows the agent to act autonomously while a human monitors in real time and retains the ability to intervene. This model was implicitly endorsed in the SANS AI and Cybersecurity panel, where Rob T. Lee, CAIO & Chief of Research at SANS Institute, cited former Deputy National Security Advisor Anne Neuberger’s position that “we need defensive agents that can reason and react faster than any human” — acknowledging that at machine-speed threat volumes, in-the-loop approval is operationally untenable for defensive workflows.

The practical question for security leaders is not which model to adopt universally, but where each applies. A working decision framework from across the sessions:

- Irreversible actions, high business impact, or inter-system data writes → human-in-the-loop

- High-velocity, time-sensitive, or defensive security operations → human-on-the-loop with compensating monitoring controls
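The two-rule framework can be expressed as a small routing function. The precedence (the in-the-loop criteria win when both apply) and the strict default for actions matching neither rule are assumptions, not positions stated in the sessions.

```python
from dataclasses import dataclass
from enum import Enum

class Oversight(Enum):
    IN_THE_LOOP = "human approves before execution"
    ON_THE_LOOP = "agent acts; human monitors and can intervene"

@dataclass(frozen=True)
class ProposedAction:
    irreversible: bool
    high_business_impact: bool
    inter_system_data_write: bool
    time_sensitive_or_defensive: bool

def required_oversight(action: ProposedAction) -> Oversight:
    # Assumed precedence: the in-the-loop criteria override speed considerations.
    if action.irreversible or action.high_business_impact or action.inter_system_data_write:
        return Oversight.IN_THE_LOOP
    # High-velocity or defensive work runs on-the-loop with compensating monitoring.
    if action.time_sensitive_or_defensive:
        return Oversight.ON_THE_LOOP
    # Assumed default: actions matching neither rule get the stricter model.
    return Oversight.IN_THE_LOOP
```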

For life sciences organizations specifically: this distinction has regulatory dimensions beyond security. Any agent with write access to validated systems — LIMS, QMS, eDMS, manufacturing execution systems — is operating in an environment where GxP data integrity principles and FDA software guidance presuppose demonstrable human oversight of consequential actions. The question of whether an agent’s actions in those systems are subject to in-the-loop or on-the-loop governance is not purely a security design decision. It intersects with existing validation frameworks and change control obligations. Most life sciences organizations have not yet formally addressed this in their validation documentation.

For organizations without a SOC, the on-the-loop model may have limited near-term applicability. The in-the-loop question — specifically, which agent actions require explicit human authorization — is the more immediately relevant governance decision.

5. The Life Sciences Context: Where the Signals Are Amplified

Several RSA 2026 sessions touched on dynamics with direct relevance to regulated industries, two of which are worth examining in detail.

In the session on AI-assisted software development, Tanya Janca described a commissioned engagement to produce custom AI-generated code for medical devices deployed in operating rooms and emergency departments. The session’s central finding was not that AI tools produced insecure code; it was that they did so while representing the output as secure. Janca described prompting an AI coding tool to generate a login screen for an embedded medical device and receiving a confident assurance that the code was safe and secure. She then cross-checked the same code with a second AI system, which identified several critical- and high-severity vulnerabilities.

Janca situated the root cause in training data: AI models were trained predominantly on open source code that treated security as optional, producing outputs that reflect those patterns with high confidence and no signal of uncertainty. For organizations in FDA-regulated software environments — where security review is a documentation and validation requirement, not just a technical practice — the absence of that uncertainty signal is a specific compliance risk, not only a technical one.

The session on clinical cybersecurity, delivered by an emergency physician at a safety-net hospital, introduced a framing of shadow AI relevant to life sciences governance more broadly. Clinicians and other regulated-environment users adopting unapproved AI tools were characterized not as policy violators but as indicators of governance design gaps: “shadow IT being the heat map of sort of where we haven’t really discussed workflows with clinicians to see where we can make them better… clinicians are not doing this to be subversive. They’re using these tools because they want to be more efficient.”

The prescription — bring frontline users into governance conversations — was supported by an operational finding: “clinicians who are involved in these conversations are actually less likely to feel burned out because they feel like they have a say in a process that governs them.” The parallel for life sciences organizations is direct: shadow AI appearing in research, regulatory affairs, manufacturing, or clinical operations environments is more usefully read as a governance design signal than as a compliance enforcement problem.

6. The Emerging Vendor Response: Tools and Frameworks Taking Shape

RSA 2026 surfaced not only the problem but early evidence of how the vendor and open-source communities are beginning to respond. Two announcements in particular — one from Cisco, one from NVIDIA — point toward an emerging architectural pattern for agentic AI security.

Threat Context Driving Urgency

Multiple sessions surfaced concrete threat signals that are accelerating vendor response:

- Criminal underground activity targeting agentic frameworks. Conference talks referenced posts offering “root shell access” to compromised machines running OpenClaw — an open-source agentic loop framework built on Claude Code. OpenClaw agents operate with the ability to execute system-level commands and interact directly with host environments. When compromised, this capability effectively grants attackers remote command execution over the host system, which may be equivalent to shell-level access depending on deployment configuration.

- Shadow agent persistence. Organizations were found to have agents still running on their networks after the projects that deployed them had ended — analogous to shadow IT but with autonomous action capability and no human owner.

- Non-malicious agents causing harm. Google’s AI tool was cited as having accidentally deleted a developer’s storage drive — presented as evidence that even correctly functioning agents cause damage without proper runtime controls.

- Multi-channel agent exposure. Agents running across Telegram, WhatsApp, and WeChat simultaneously were identified as creating massive visibility gaps for security teams.

The NVIDIA Open Shell Integration

A key architectural detail discussed at the conference: NVIDIA’s Open Shell provides a secure container within which an OpenClaw or Open Flow agent runs. Cisco’s Defense Claw uses hooks into Open Shell so that every agent execution inside that container automatically activates the full Cisco security tool suite. Security becomes ambient and automatic rather than opt-in, with vulnerability scanning and posture checks occurring at the runtime boundary rather than only at deployment.

Cisco’s Response: Defense Claw

Cisco announced Defense Claw — a security framework specifically designed for OpenClaw and Open Flow deployments. Defense Claw is designed to activate automatically whenever an agent is deployed, requiring no manual configuration from security teams.

Open-Source Tools Released to GitHub

Cisco also released a suite of individual security tools ahead of the Defense Claw framework — all free and open-source — available independently on GitHub:

| Item | Mechanism |
|---|---|
| NVIDIA Open Shell integration | Hooks into NVIDIA’s Open Shell — a secure runtime container for OpenClaw agents announced at GTC — to auto-instantiate security services at runtime. |
| Skill scanning | Scans agent skills and tools for vulnerabilities at execution time. |
| MCP scanner | Inspects MCP servers for malicious or hidden instructions. |
| AI Bill of Materials | Tracks the components, models, and data sources comprising each agent. |
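To illustrate what "hidden instructions" in an MCP server can look like, here is a deliberately naive heuristic sketch. It is not Cisco's MCP scanner and is easily evaded. It assumes tool names and descriptions have already been fetched from a server's tool listing, and the regex patterns are hypothetical examples of common injection phrasing.

```python
import re
import unicodedata

# Illustrative patterns only; production scanners use far richer detection.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"do not (tell|inform|mention).{0,40}user", re.IGNORECASE),
    re.compile(r"<\s*(important|system)\s*>", re.IGNORECASE),
]

def hidden_characters(text: str) -> list:
    """Format-category (Cf) code points, such as zero-width spaces, render invisibly."""
    return [hex(ord(ch)) for ch in text if unicodedata.category(ch) == "Cf"]

def scan_tool_description(name: str, description: str) -> list:
    findings = []
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(description):
            findings.append(f"{name}: matches {pattern.pattern!r}")
    hidden = hidden_characters(description)
    if hidden:
        findings.append(f"{name}: invisible characters {hidden}")
    return findings
```

The point of the sketch is the attack surface rather than the detection method: tool descriptions are text an agent reads and may obey, so they deserve the same scrutiny as any other untrusted input.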

USDM Perspective

The sessions at RSA 2026 reflected an industry that has arrived at broad diagnostic consensus on agentic AI risk — and is working through what governance at scale actually requires in practice. The foundational questions being asked — what agents exist, who owns them, what they are permitted to do autonomously — are organization-specific decisions that tooling cannot resolve on its own.

For life sciences organizations, the governance questions intersect with an existing regulatory framework that already presupposes human oversight of consequential system actions, documented evidence of security review, and controlled change processes for validated environments. How agentic AI is introduced into that framework — and how existing validation obligations apply to autonomous agent actions — is a question most organizations have not yet formally addressed.

The RSA 2026 sessions suggest that organizations establishing governance foundations now — agent inventory, acceptable use posture, board accountability structures — are doing so while the deployment landscape is still navigable. The Cisco survey data implies that the next phase of enterprise adoption will substantially increase the volume and complexity of that governance problem.

The vendor response emerging from RSA 2026 — open-source tooling, runtime security containers, automatic activation frameworks — suggests the security ecosystem is beginning to close the gap. Organizations that have established governance foundations will be better positioned to adopt and integrate those tools as they mature.

USDM helps organizations close the agentic AI governance gap by establishing foundational controls—agent inventory, ownership, and acceptable use—aligned with existing GxP and regulatory frameworks. By integrating risk-based governance, real-time monitoring, and compliance-ready processes, USDM enables secure AI adoption without compromising data integrity, validation standards, or operational trust. Let’s talk!